Across the world, communities are already living with the consequences of climate change and environmental degradation. Farmers face unpredictable rains. Coastal communities confront rising seas. Forest-dependent peoples see their livelihoods erode as ecosystems decline. In response, development agencies, international financial institutions, NGOs, and others are scaling up efforts that aim — often simultaneously — to reduce poverty, strengthen resilience, protect ecosystems, and contribute to global climate goals.

Yet a persistent challenge remains: how do we know whether these investments are delivering results that last in a world of environmental limits?

Evaluation plays a powerful — if often underestimated — role in answering that question. It shapes what is considered effective, what gets funded and scaled, and what is ultimately abandoned. Yet most evaluations still focus narrowly on short-term social or economic results, paying scant attention to environmental sustainability.

This is no longer sufficient. In an era defined by climate change, biodiversity loss, and resource scarcity, evaluation must move beyond linear models and adopt a systems perspective that recognizes the interdependence of human and natural systems. Evaluation is not just a technical exercise; it helps identify what works, for whom, under what conditions — and whether success today may undermine sustainability tomorrow.

Paradoxically, however, many development evaluations continue to treat the environment as a backdrop rather than a central actor. Projects are assessed primarily for how well they deliver intended social and economic benefits, while environmental effects — positive or negative — are relegated to side notes or omitted altogether. If development interventions are to be sustainable, this must change.

Development does not happen in a vacuum

Two simple premises should guide any evaluation at the environment–development nexus. First, every intervention takes place in a specific social, economic, political, and ecological context that shapes its chances of success. Second, virtually all human activity affects the natural environment, whether intentionally or not. Agriculture, infrastructure, energy, water supply, and even social programs leave environmental footprints.

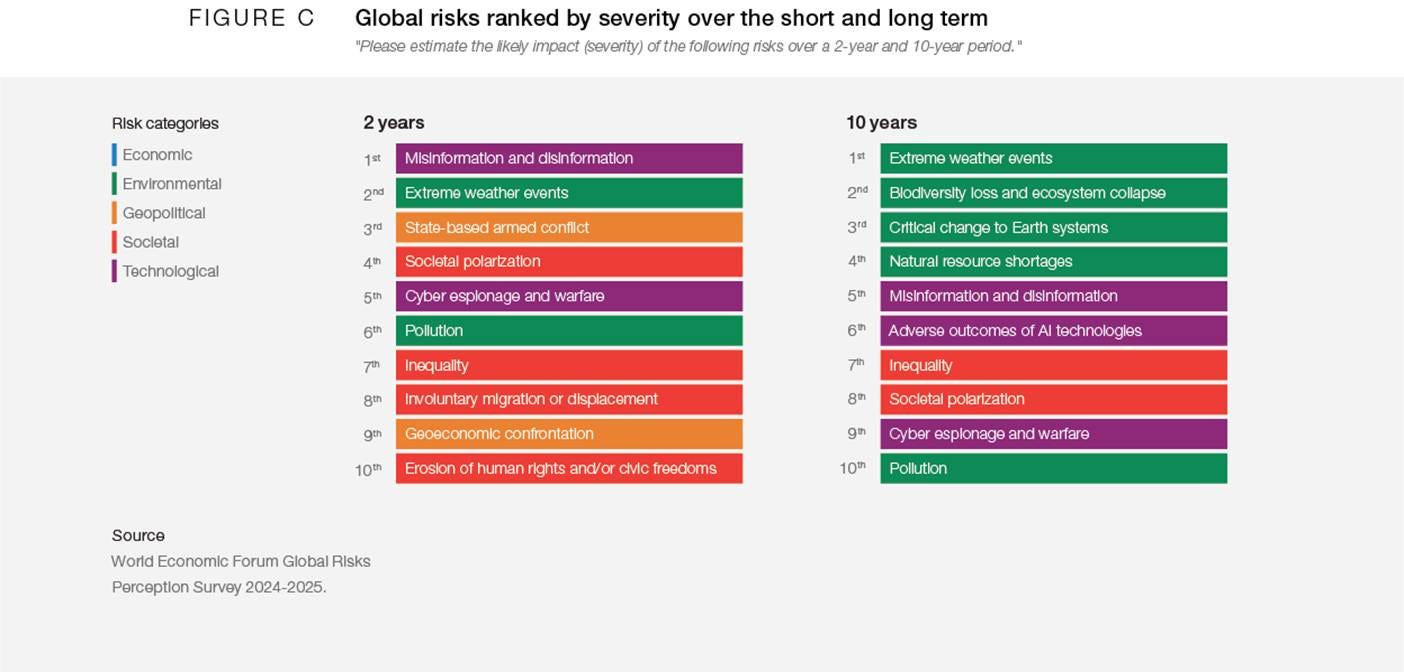

The World Economic Forum’s Global Risks Report 2025 captures a sobering reality: environmental risks now dominate the medium- and long-term outlook. Climate extremes, ecosystem collapse, water stress, and pollution are increasingly shaping humanity’s trajectories. These risks interact with inequality, food insecurity, fragility, and conflict, multiplying their effects and complicating policy responses.

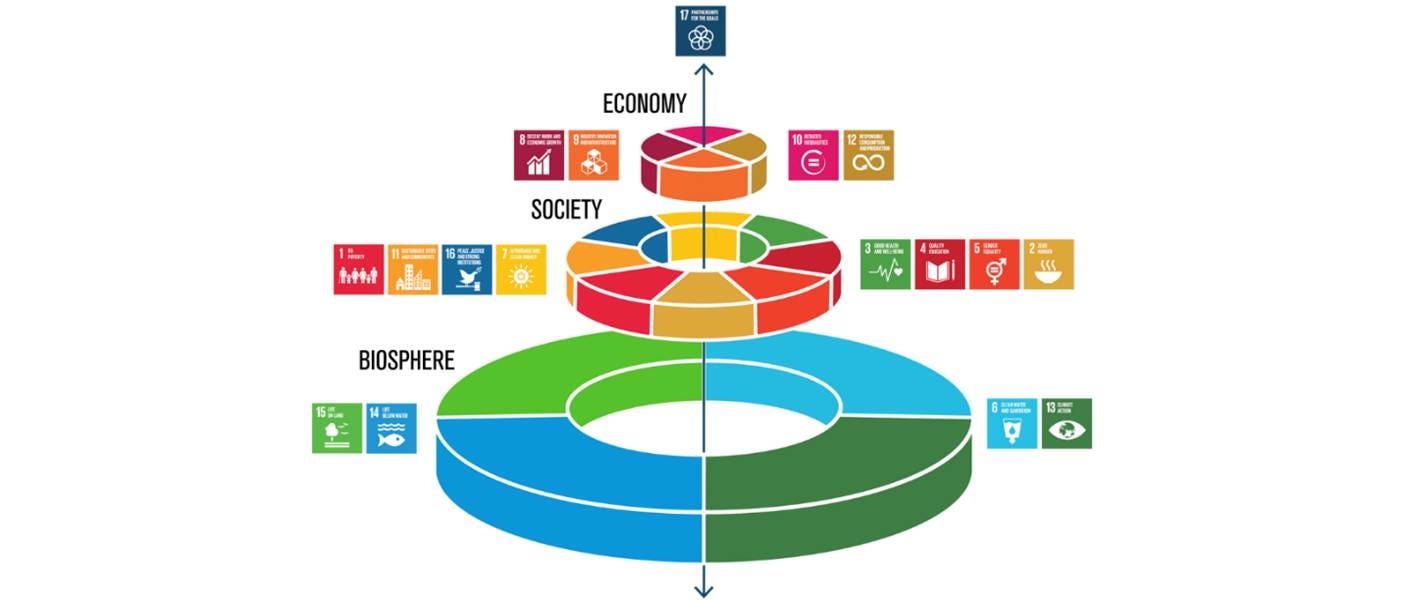

The Sustainable Development Goals (SDGs) explicitly recognize this interdependence. The now-familiar SDG wedding cake offers a useful reminder: the biosphere is the foundation upon which societies and economies rest. Without stable ecosystems, neither poverty reduction nor inclusive growth can be sustained. For policy-makers and evaluators alike, this has a clear implication: assessing development outcomes without assessing environmental sustainability is no longer credible.

Yet in practice, progress remains fragmented. Social and economic goals often advance at the expense of environmental ones, despite growing evidence that environmental degradation ultimately undermines development gains. As a result, development portfolios may deliver short-term gains while inadvertently increasing long-term vulnerability.

This matters because humanity has already crossed several planetary boundaries — the safe operating space for Earth systems. These include biosphere integrity, biogeochemical flows, novel entities, and climate change. Development interventions that fail to account for these dynamics risk solving one problem while worsening another.

A gap between ambition and practice in evaluation

Despite growing awareness, evaluation practice has struggled to keep pace. Reviews by evaluation networks show growing demand for sustainability-informed evaluations. Both project proponents and intended beneficiaries want evidence that interventions are not only effective today, but durable tomorrow. However, environmental dimensions remain underrepresented in most evaluations.

Two factors stand out as explaining this gap. The first is scope. Many evaluations (and evaluation commissioners) define their evaluand narrowly, focusing on project activities and short-term outcomes while ignoring broader environmental interactions. The second is capacity. Evaluators are usually trained in social science methods but lack confidence or expertise in environmental and ecological analysis.

Traditional project models and evaluation approaches rely on linear theories of change: inputs lead to outputs, which lead to outcomes and impacts. Such models struggle to capture the feedback loops, thresholds, and unintended effects characteristic of complex development contexts.

Seeing development as a coupled human–natural system

To address this gap, evaluation must embrace a systems perspective, often described as coupled human and natural systems (CHANS) or social-ecological systems (SES). This approach recognizes that humans are not separate from nature; we are embedded within it, constantly interacting with ecological processes in dynamic and adaptive ways. It also recognizes that development interventions operate within systems shaped by policies, markets, institutions, and ecological processes.

The intellectual roots of this perspective are perhaps best associated with Elinor Ostrom, Nobel laureate in economics. Ostrom challenged the idea that shared natural resources inevitably lead to overexploitation — the so-called tragedy of the commons. She demonstrated that communities can develop governance arrangements that manage resources sustainably, provided institutions align with ecological realities.

For evaluation, this insight is profound. It means examining not only what interventions do, but also how they interact with broader systems and how those systems respond. Social outcomes, economic incentives, governance structures, and environmental processes must be analyzed together. Change in one domain often triggers unintended effects in another, creating feedback loops that can either reinforce or undermine sustainability.

Evaluations that focus narrowly on income generation, yields, or service delivery without examining environmental sustainability provide an incomplete — and potentially misleading — picture of impact. Interventions designed to improve resilience or livelihoods may inadvertently undermine the ecological foundations on which those livelihoods depend. Without a systems lens, evaluations risk missing these contradictions — and thus misjudging both impact and sustainability.
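
The contradiction described above can be made concrete with a toy simulation. The following is a minimal sketch, not a method drawn from the underlying article: it assumes a single renewable resource with logistic regrowth and a fixed annual harvest quota, and every name and parameter value (`simulate_harvest`, the quotas, the regrowth rate, the carrying capacity) is hypothetical and chosen purely for illustration.

```python
from typing import List, Tuple


def simulate_harvest(
    quota: float,
    years: int = 30,
    initial_stock: float = 100.0,
    regrowth_rate: float = 0.4,
    carrying_capacity: float = 100.0,
) -> Tuple[List[float], float]:
    """Return (yearly harvests, final stock) for a fixed annual harvest quota.

    All parameter values are hypothetical and chosen only for illustration.
    """
    stock = initial_stock
    harvests: List[float] = []
    for _ in range(years):
        # Short-term benefit: take the quota, or whatever is left of the stock.
        harvest = min(quota, stock)
        remaining = stock - harvest
        # Feedback: regrowth depends on what remains (logistic growth).
        regrowth = regrowth_rate * remaining * (1 - remaining / carrying_capacity)
        stock = remaining + regrowth
        harvests.append(harvest)
    return harvests, stock


modest, modest_stock = simulate_harvest(quota=8.0)
intensive, intensive_stock = simulate_harvest(quota=15.0)

# Compare a five-year project window with a thirty-year horizon.
print("5-year harvest :", sum(modest[:5]), "vs", sum(intensive[:5]))
print("30-year harvest:", round(sum(modest), 1), "vs", round(sum(intensive), 1))
print("final stock    :", round(modest_stock, 1), "vs", round(intensive_stock, 1))
```

Under these assumed parameters, the intensive quota delivers more within a five-year window but exhausts the resource well before the thirty-year horizon, so its cumulative harvest ends up lower than that of the modest quota. An evaluation that looked only at the first five years would rank the two options the wrong way round.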

When does the environmental dimension matter most?

While all development interventions have environmental implications, some clearly demand deeper analysis. These include, first, interventions with explicit environmental objectives, such as protecting a natural habitat or reducing chemical pollution. In such cases, assessing the environmental impacts is obviously central.

Second are interventions whose success depends on a healthy environment, even if environmental improvement is not an explicit goal. Nowhere is the need for integrated evaluation clearer than in agriculture and rural development. Agriculture sustains billions of livelihoods, yet it is also a major driver of deforestation, water stress, biodiversity loss, and greenhouse gas emissions. Around three billion people live in areas highly vulnerable to climate change, many of them smallholder farmers, pastoralists, and fishers. Women, Indigenous peoples, and marginalized groups are disproportionately affected. By 2030, drought alone could displace hundreds of millions of people.

At the same time, nature underpins food systems. Healthy soils regulate water and store carbon. Pollinators support the majority of global food crops. Forests and wetlands buffer communities against climate shocks. When these systems are degraded, food security and incomes suffer.

Third are interventions that use or alter natural resources, such as land, water, or forests, or that are implemented near environmentally sensitive areas. Large-scale or long-term projects, in particular, can disrupt established patterns of resource use, wildlife movement, or waste generation, with cascading effects over time.

Finally, any intervention that introduces pollutants, whether chemicals, waste, heat, noise, or biological agents, should be assessed for its environmental consequences. Pollution is not limited to toxic substances: anything that degrades ecosystems or makes them less hospitable to life qualifies.

Drawing system boundaries — and asking the right questions

A systems-based evaluation begins by situating the intervention within its broader context. This includes policies, markets, institutions, and other programs operating in the same space. Too often, well-intentioned initiatives work at cross-purposes, undermining each other’s effects.

Defining system boundaries is not about including everything; it is about including what matters. Crucially, systems thinking pushes evaluators beyond asking whether activities were implemented as planned. It asks whether the intervention actually made a difference to the problem it set out to address, and whether that difference is likely to last.

What distinguishes systems-focused evaluations is their attention to interactions across space and time. Environmental effects may manifest far from project sites or long after implementation ends. Conversely, external environmental changes — such as climate shocks or land-use change — may shape project outcomes in ways that standard evaluations overlook. Expanding the analytical lens makes unintended effects more visible.

Systems thinking also shifts attention from symptoms to root causes. Environmental degradation is rarely driven by local behavior alone. Economic incentives, agricultural policies, land tenure systems, and market forces all shape how resources are used. Evaluations that ignore these drivers risk overstating the expected results while underestimating the risks to sustainability.

Working across disciplines — and worldviews

Environmental issues cut across sectors and disciplines, from agronomy and ecology to economics, engineering, and public policy. Evaluators therefore need to engage with expertise beyond their own comfort zones. This includes not only scientists, but also Indigenous peoples and local communities, who often possess the most intimate knowledge of local ecosystems and livelihoods.

Bringing these perspectives together is not easy. Different disciplines and stakeholder groups use different languages, value systems, and ways of knowing. Facilitation and translation are therefore as important as technical methods. The goal is not to force consensus, but to build a coherent picture of how the system functions and how the intervention has interacted with it.

Methods fit for complexity

A systems perspective does not prescribe specific methods, but it does influence how methods are chosen. The starting point should always be the evaluation questions, not the evaluator’s toolkit. Methods that trace how interventions contribute to change in complex, non-linear systems are particularly useful, because they offer alternatives to overly linear cause-and-effect models that rarely reflect reality.

Geospatial approaches deserve special mention. Satellite imagery, remote sensing, and spatial analysis can fill critical data gaps, especially in large, remote, or insecure areas. They allow evaluators to track land-use change, deforestation, vegetation cover, and infrastructure and settlement expansion over time, and even to reconstruct baselines retrospectively.

However, geospatial data show what changed, not necessarily why. Social, economic, and institutional mechanisms still require qualitative inquiry, fieldwork, and stakeholder engagement. The strongest evaluations combine geospatial evidence with more traditional methods.
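
As a rough illustration of how vegetation cover might be tracked between a baseline and an endline, the sketch below computes the Normalized Difference Vegetation Index (NDVI), defined as (NIR − Red) / (NIR + Red), for two small grids of reflectance values. NDVI is one widely used vegetation index; the article does not name a specific index, so its use here is an assumption. The function names and the 2×2 reflectance grids are hypothetical, and a real analysis would read georeferenced satellite bands rather than hand-typed lists.

```python
from typing import List

Grid = List[List[float]]


def ndvi(nir: Grid, red: Grid) -> Grid:
    """Per-pixel NDVI = (NIR - Red) / (NIR + Red); 0.0 where both bands are zero."""
    return [
        [(n - r) / (n + r) if (n + r) != 0 else 0.0 for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir, red)
    ]


def mean_change(before: Grid, after: Grid) -> float:
    """Average per-pixel difference (after minus before) across the grid."""
    diffs = [a - b for row_a, row_b in zip(after, before) for a, b in zip(row_a, row_b)]
    return sum(diffs) / len(diffs)


# Hypothetical 2x2 reflectance grids; one pixel loses vegetation by the endline.
nir_baseline = [[0.6, 0.6], [0.5, 0.5]]
red_baseline = [[0.2, 0.2], [0.1, 0.1]]
nir_endline = [[0.3, 0.6], [0.5, 0.5]]
red_endline = [[0.3, 0.2], [0.1, 0.1]]

change = mean_change(ndvi(nir_baseline, red_baseline), ndvi(nir_endline, red_endline))
print(f"Mean NDVI change: {change:+.3f}")
```

A negative mean change signals vegetation loss over the period, but, as noted above, it says nothing about why the loss occurred; that still requires fieldwork and stakeholder engagement.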

Evaluation for a world of limits

Evaluation must evolve if it is to remain credible and useful in a world defined by environmental limits. This does not mean turning every evaluation into an ecological impact assessment, nor does it imply adding complexity for its own sake. It means recognizing a basic reality: development outcomes and environmental sustainability are inseparable. Interventions that degrade ecosystems may deliver short-term gains, but they ultimately undermine the resilience and equity they seek to promote. Conversely, efforts to protect nature will not endure unless they also improve human well-being. A systems perspective that takes human and natural systems seriously, together, allows evaluation to move from counting outputs to understanding lasting change. In an era of climate risk, biodiversity loss, and constrained resources, this is not simply a methodological choice. It is a responsibility.

[This piece draws on an article by Jeneen R. Garcia and Juha I. Uitto published in the journal Evaluation and Program Planning in 2025.]